Are you a developer or just a curious individual who wants to dip their toes in the Cloud, but are not sure whether to go for OpenShift Local or Single Node OpenShift? You’ve come to the right place. In this article, I will explore the differences and similarities between these two variants of OpenShift, so you can see which one is right for you. I will also share my own preferences and reasons why.

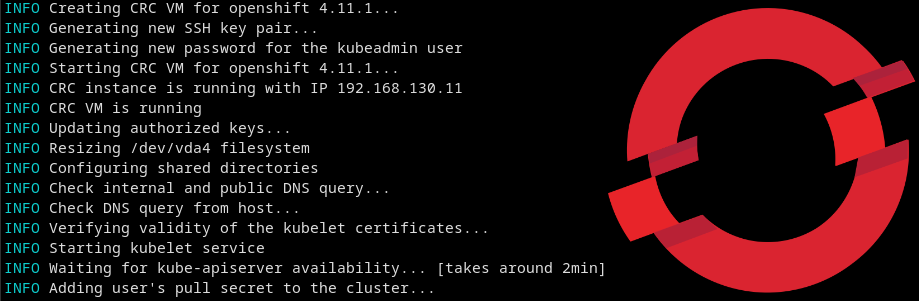

OpenShift, a developer-friendly Kubernetes distribution, comes in several flavours, including a very easy-to-use variant called OpenShift Local, the artist formerly known as CodeReady Containers. It runs on Windows, macOS (Intel only, for now), and of course, Linux.

OpenShift Local

OpenShift Local lets you run a highly opinionated, preconfigured OpenShift instance on your local machine, without a lot of fuss. It doesn’t need all that much in terms of CPU, memory and storage. You can easily run it on a reasonably kitted out laptop or desktop. Its minimum requirements are:

- 4 physical CPU cores

- 9 GB of free memory

- 35 GB of storage space

You can configure it to use more CPUs, memory and storage, if your particular scenario requires it.
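As a rough pre-flight check, you could compare the minimums above against what your machine reports. The following is only a minimal sketch: the function name `meets_minimums` is my own invention, `os.cpu_count()` reports logical rather than physical cores (so treat it as an upper bound), and free memory is passed in by hand because Python's standard library has no portable call for it.

```python
import os
import shutil

# Minimums quoted in this article for OpenShift Local.
MIN_CORES = 4
MIN_MEMORY_GB = 9
MIN_DISK_GB = 35


def meets_minimums(cores: int, free_memory_gb: float, free_disk_gb: float) -> list[str]:
    """Return a list of shortfalls; an empty list means the host looks OK."""
    problems = []
    if cores < MIN_CORES:
        problems.append(f"need {MIN_CORES} CPU cores, found {cores}")
    if free_memory_gb < MIN_MEMORY_GB:
        problems.append(f"need {MIN_MEMORY_GB} GB free memory, found {free_memory_gb:.1f}")
    if free_disk_gb < MIN_DISK_GB:
        problems.append(f"need {MIN_DISK_GB} GB free disk, found {free_disk_gb:.1f}")
    return problems


if __name__ == "__main__":
    # Logical CPU count; the real requirement is for physical cores.
    cores = os.cpu_count() or 0
    # Free space on the volume holding the home directory.
    free_disk_gb = shutil.disk_usage(os.path.expanduser("~")).free / 1024**3
    # Hypothetical value: fill in your own free memory here.
    free_memory_gb = 16.0
    for line in meets_minimums(cores, free_memory_gb, free_disk_gb) or ["host looks fine"]:
        print(line)
```

An empty result only tells you the host clears the bare minimum; anything beyond the default workload will want more headroom.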

Here are some of the highlights of OpenShift Local:

- Batteries included – just start it up and use it.

- Local storage pre-configured.

- Includes a VM – this allows it to run on a wide variety of underlying hardware. Alternatively, it can be run in podman for a more resource friendly installation.

- Fixed cluster name and domain (crc.testing) – you cannot change this.

- Does not talk to anything outside the host it is running on – this can be worked around, but it is generally not recommended.

- Cannot be upgraded without a reinstall – it will be wiped clean between upgrades.

- Can recover from expired internal certificates – this is a very nice feature if you only use it sporadically, or go on holidays for a few weeks!

While the data does not persist between updates, the settings do. So, if you’ve configured OpenShift Local to run in a VM with 24GB RAM and 8 vCPUs, it will continue to do so the next time you install it.

I have a Lenovo P1 laptop with 64GB RAM and a six-core Intel i7, and have configured OpenShift Local to use 32GB RAM and 6 vCPUs. I have also enabled cluster logging, which is not enabled by default and consumes additional resources. It will happily run things like OpenShift GitOps, Pipelines, and Serverless. Obviously, the more you want to run on it, the more resources you will need to assign to it. The flexibility is very nice.
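For reference, a sizing like mine is applied with `crc config set`, using the `memory` key (in MiB) and the `cpus` key. Here is a small sketch that builds those commands from friendlier numbers; the helper name `crc_config_commands` is hypothetical, and it only prints the commands rather than running them.

```python
import shlex


def crc_config_commands(memory_gb: int, cpus: int) -> list[list[str]]:
    """Build `crc config set` invocations for the given sizing."""
    return [
        # crc takes the memory setting in MiB, so convert from GiB.
        ["crc", "config", "set", "memory", str(memory_gb * 1024)],
        ["crc", "config", "set", "cpus", str(cpus)],
    ]


if __name__ == "__main__":
    # The sizing described above: 32GB RAM and 6 vCPUs.
    for cmd in crc_config_commands(memory_gb=32, cpus=6):
        print(shlex.join(cmd))
```

As far as I know, these settings are picked up the next time the instance is started, so a running instance needs a `crc stop` and `crc start` before they apply.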

Single Node OpenShift

Single Node OpenShift, also known as SNO, is a specific configuration of a regular OpenShift installation. It has a single control plane node that has been configured to also run regular workloads. It is targeted at edge locations where an easy-to-manage container platform is desirable, but resources are limited.

Here are some of the highlights of Single Node OpenShift:

- Requires installation via the openshift-install CLI or the Assisted Installer, an easy-to-use installation wizard on Red Hat’s OpenShift Cluster Manager site.

- Local storage needs to be configured using the ODF LVM Operator.

- Name can be whatever you like, you’re not stuck with a fixed cluster name. I called mine “blackslab”, since the case it lives in is very large, and, you guessed it, black.

- If installed on bare metal, can be connected directly to the LAN, or even the Internet, although I would be hesitant to do the latter, unless you’re very sure of what you’re doing.

- Is upgradeable to newer releases of OpenShift without data loss, so you can always run the latest version of OpenShift that is available.

- Can be managed centrally through Red Hat Advanced Cluster Management for Kubernetes (ACM).

- Needs to be kept running to avoid certificate expiration. You can’t pause this installation, forget about it for a few weeks, then start it up again and hope it all works flawlessly. If you’re lucky, it will work; if not, you’ll have to reinstall your cluster.

- Is eligible for official support, if you have the right entitlements.

Single Node OpenShift also allows you to add worker nodes to your cluster, through OpenShift Cluster Manager, should you choose to do so.

If you want to use Persistent Volumes, you’ll need an additional disk, preferably an SSD, and configure the ODF LVM Operator to use it. This can seem intimidating at first, but it’s not as hard as it sounds. I am planning to write a guide on how to configure SNO for a home lab, with all the bells and whistles, in the near future.

I have Single Node OpenShift running on an aging quad-core i7 running at 4GHz with 32GB RAM and 2 SSDs. The first SSD has the OS and data for the standard operators; the second has been configured to be used by the LVM Operator. Like my OpenShift Local installation, it is quite happy to run OpenShift GitOps, Pipelines and other operators. I also use it to tinker with the Certificate Manager. I have a hosted DNS zone in AWS with entries that map to a private IP address on my network. This allows other machines on my network to resolve the cluster without my having to run a DNS server in my own network. AWS charges me about 1 to 3 euros per month, depending on how much I’ve fiddled with it. I use the AWS free tier.
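To make the DNS part a bit more concrete: a single-node cluster needs the names `api.<cluster>.<base domain>`, `api-int.<cluster>.<base domain>` and the wildcard `*.apps.<cluster>.<base domain>` to resolve, and on SNO they can all point at the one node. The sketch below just generates that list. The helper name `sno_dns_records`, the base domain `example.com` and the IP address are placeholders of mine, not my real setup, and the output is a plain mapping rather than anything AWS-specific.

```python
def sno_dns_records(cluster: str, base_domain: str, node_ip: str) -> dict[str, str]:
    """Map each DNS name a single-node cluster needs to the node's IP."""
    names = [
        f"api.{cluster}.{base_domain}",       # Kubernetes API
        f"api-int.{cluster}.{base_domain}",   # internal API endpoint
        f"*.apps.{cluster}.{base_domain}",    # wildcard for application routes
    ]
    # On SNO everything lives on one machine, so every name gets the same IP.
    return {name: node_ip for name in names}


if __name__ == "__main__":
    # "blackslab" is my cluster name; the domain and IP are made up.
    for name, ip in sno_dns_records("blackslab", "example.com", "192.168.1.50").items():
        print(f"{name}\tA\t{ip}")
```

Each line of output corresponds to one A record you would create in the hosted zone.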

When to choose which kind?

If you’re looking for a quick-to-install, no-fuss OpenShift experience, just to test your apps on your laptop or desktop, OpenShift Local is the one for you. If you’re after more configurability and an experience closer to a “real” cluster, and are willing to put in the extra work that requires, Single Node OpenShift may be a better choice. Using the cert-manager Operator for Red Hat OpenShift, you can also configure your own certificates for your cluster.

Personally, I am a big fan of SNO, and if you have a spare machine, or a laptop or desktop with enough RAM and disk space to run a VM with the specifications required for it, it’s by far the best “small” OpenShift experience.